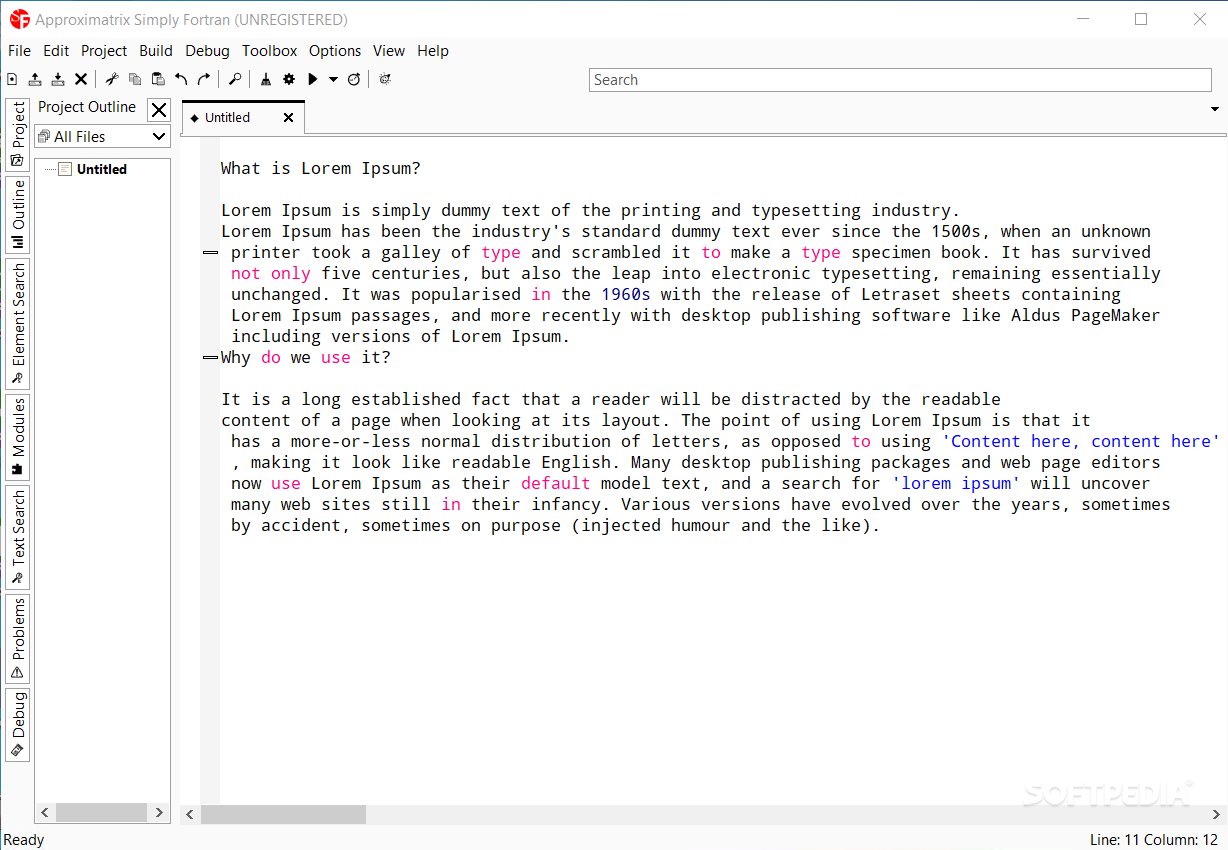

Both the GNU and Intel Fortran compilers have native support for OpenMP. All you need to do to create parallel programs is to add some OpenMP directives, specially formatted comments, to your code and compile the program with a flag that enables OpenMP. For gfortran, compile with `-fopenmp` and for ifort, the flag is `-openmp` (recent Intel compilers spell it `-qopenmp`).

The trick to getting the most out of parallel processing is to run sections of code in parallel such that the additional overhead of starting new threads is outweighed by the time required to perform the task. That is, it's ideal to split the code into distinct tasks that are each sufficiently hard to compute on their own, ones that might take more than a couple of seconds to compute, rather than tasks that finish almost instantly.

In many cases you will have sections of code that are embarrassingly parallel. That is, a group of tasks needs to be carried out multiple times and each task is independent of the others. Monte Carlo experiments are good examples of this. At the end, all of the trials simply need to be collected in any order so that summary statistics such as the mean and standard deviation can be calculated.

Here is a short example program, mc1, to get started. Suppose we have a function called monte_carlo which we need to run nmc times. We would like to compute the mean of these results. In the example below, monte_carlo(n) draws n Uniform(0,1) random numbers and returns the sample mean.

    program mc1
      implicit none

      integer, parameter :: nmc = 100      ! number of trials
      integer, parameter :: n = 100000     ! sample size
      real, dimension(nmc) :: mu           ! result of each trial
      real :: mean, stdev                  ! mean and standard deviation
      integer :: j

      !$OMP PARALLEL DO
      do j = 1, nmc
         print *, 'Experiment', j
         mu(j) = monte_carlo(n)
      end do
      !$OMP END PARALLEL DO

      mean = sum(mu) / real(nmc)
      ! square root of the mean squared deviation across trials
      stdev = sqrt(sum((mu - mean)**2) / real(nmc))
      print *, 'mean', mean
      print *, 'stdev', stdev

    contains

      ! Draws n Uniform(0,1) random numbers and returns the sample mean
      function monte_carlo(n) result(y)
        integer, intent(in) :: n
        integer :: i
        real :: y, u

        y = 0.0
        do i = 1, n
           call random_number(u)   ! draw from Uniform(0,1)
           y = y + u               ! sum the draws
        end do
        y = y / real(n)            ! return the sample mean
      end function monte_carlo

    end program mc1

Depending on your computer, because of the simplicity of this experiment you will probably need to set n very high in order to see a difference in execution speed. In fact, for low values of n (even 100000 in my case) the parallel code is actually slower. This illustrates the earlier point about the overhead of spawning additional threads. The chunks of code that are parallelized should be sufficiently hard to compute.
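One way to check the overhead point on your own machine is to time the same loop with and without the directive. The following is only a minimal sketch, not part of the original program: the program name `mc_timing`, the value of `n`, and the double precision accumulator are my assumptions, and `omp_get_wtime` and `omp_get_max_threads` come from the standard `omp_lib` module, so the program must be compiled with OpenMP enabled.

```fortran
program mc_timing
  use omp_lib, only: omp_get_wtime, omp_get_max_threads
  implicit none

  integer, parameter :: nmc = 100        ! number of trials
  integer, parameter :: n = 1000000      ! sample size (assumed; raise or lower to taste)
  real, dimension(nmc) :: mu             ! result of each trial
  double precision :: t0, t1             ! wall-clock timestamps
  integer :: j

  ! Serial pass: no directive, one thread does all trials
  t0 = omp_get_wtime()
  do j = 1, nmc
     mu(j) = monte_carlo(n)
  end do
  t1 = omp_get_wtime()
  print *, 'serial seconds:  ', t1 - t0

  ! Parallel pass: trials are divided among the available threads
  t0 = omp_get_wtime()
  !$OMP PARALLEL DO
  do j = 1, nmc
     mu(j) = monte_carlo(n)
  end do
  !$OMP END PARALLEL DO
  t1 = omp_get_wtime()
  print *, 'parallel seconds:', t1 - t0
  print *, 'max threads:     ', omp_get_max_threads()
  print *, 'mean (last pass):', sum(mu) / real(nmc)

contains

  ! Draws n Uniform(0,1) random numbers and returns the sample mean.
  ! The sum is kept in double precision (my change) so that a very
  ! large n does not stall a single precision accumulator.
  function monte_carlo(n) result(y)
    integer, intent(in) :: n
    integer :: i
    real :: y, u
    double precision :: s

    s = 0.0d0
    do i = 1, n
       call random_number(u)   ! how threads share the generator depends on the compiler runtime
       s = s + u
    end do
    y = real(s / n)
  end function monte_carlo

end program mc_timing
```

Assuming the file is saved as `mc_timing.f90` (a name chosen here for illustration), it can be built with `gfortran -fopenmp mc_timing.f90 -o mc_timing`, and the thread count can be varied through the `OMP_NUM_THREADS` environment variable. Timings will differ from machine to machine, so treat the numbers as a rough comparison only.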
